Imagine standing in a dimly lit cathedral, raising your smartphone, and watching as it instantly maps every archway, pillar, and shadow in three-dimensional space. This isn’t magic—it’s the result of 3D depth cameras, a technology that has quietly revolutionized how our devices see and understand the world around us.

Unlike traditional cameras that capture flat images, depth cameras measure the distance between the lens and every point in a scene, creating a detailed three-dimensional map. You’ve likely experienced this technology firsthand: when your phone’s portrait mode blurs the background with uncanny precision, when Face ID unlocks your device in complete darkness, or when augmented reality apps seamlessly place virtual furniture in your living room.

One of the key technologies behind these capabilities is Deep Trench Isolation (DTI). It addresses a problem that plagued earlier depth sensors: crosstalk. When infrared light bounces back from a subject, it needs to be recorded by the correct pixel on the sensor. Without proper isolation, light, and the electrical charge it generates inside the silicon, spills into neighboring pixels, creating fuzzy, inaccurate depth maps. DTI tackles this by etching microscopic trenches between pixels, creating barriers that keep light and charge where they belong.

For photographers and tech enthusiasts, understanding how depth cameras work isn’t just academic curiosity. It explains why your latest smartphone captures dramatically better portraits than your previous model, why certain devices excel at low-light depth sensing, and what to look for when evaluating cameras for professional computational photography work.

What Exactly Are 3D Depth Cameras?

You’ve probably been using 3D depth cameras without even realizing it. Every time you snap a portrait photo with that beautifully blurred background, activate an AR filter that perfectly maps to your face, or unlock your phone with facial recognition, there’s a good chance a depth camera is working behind the scenes. These clever pieces of technology have quietly become part of our daily lives, appearing in smartphones, tablets, security systems, and even robot vacuums navigating around your furniture.

So what makes a depth camera different from a regular camera? While traditional cameras capture a flat, two-dimensional image, depth cameras can measure the distance between the camera and objects in the scene. Think of it as giving your camera a sense of spatial awareness, allowing it to understand not just what things look like, but how far away they are. This three-dimensional understanding opens up possibilities that go far beyond standard photography.

There are three main technologies that make this depth perception possible. Time-of-Flight (ToF) cameras emit infrared light, either as short pulses or as a rapidly modulated signal, and measure how long it takes to bounce back, either directly or from the phase shift of the returning signal. It is similar in spirit to how bats use echolocation. Structured light systems project a known pattern of dots or lines onto a scene and analyze how that pattern deforms over different surfaces and distances. Finally, stereo vision cameras use two lenses positioned slightly apart, much like our own eyes, and calculate depth by comparing the slight differences between their two viewpoints.
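All three approaches boil down to simple geometry. The Python sketch below shows the textbook relationships: a pulsed ToF sensor halves the round-trip distance the light covers, a modulated (indirect) ToF sensor converts a phase shift into distance, and a stereo pair triangulates depth from disparity. Structured light triangulates in essentially the same way, with the projector standing in for the second camera. The function names and example values are illustrative assumptions, not taken from any particular device.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def direct_tof_distance(round_trip_s: float) -> float:
    """Pulsed (direct) ToF: light travels out and back, so halve the path."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def indirect_tof_distance(phase_rad: float, mod_freq_hz: float) -> float:
    """Continuous-wave (indirect) ToF: distance from the phase shift of the
    returning modulated signal. Unambiguous only up to c / (2 * f)."""
    return SPEED_OF_LIGHT * phase_rad / (4.0 * math.pi * mod_freq_hz)


def stereo_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Stereo triangulation: depth = focal length * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    print(f"{direct_tof_distance(10e-9):.3f} m")              # 10 ns round trip
    print(f"{indirect_tof_distance(math.pi, 100e6):.3f} m")   # half-cycle shift at 100 MHz
    print(f"{stereo_depth(1000.0, 0.05, 25.0):.3f} m")        # 25 px disparity, 5 cm baseline
```

Note how small the numbers are: a 10 ns round trip already corresponds to about 1.5 m, which is why ToF timing and pixel signals have to be so clean.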

This is all part of the broader evolution in advanced camera sensor technology that’s transforming how we capture images.

Why does depth accuracy matter for your photography? The answer is simple: better depth data means better results. When your camera knows precisely how far away your subject is compared to the background, it can create more convincing bokeh effects, more accurate subject separation in portrait mode, and more realistic augmented reality experiences. Poor depth measurement leads to those frustrating moments where the blur effect cuts through your subject’s hair or leaves random background objects unnaturally sharp. As these cameras continue improving, the line between computational photography and traditional depth-of-field techniques becomes increasingly blurred, giving photographers powerful new creative tools.
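To see why depth accuracy drives blur quality, here is a minimal sketch assuming a portrait mode that mimics a real lens using the thin-lens circle-of-confusion formula; actual phone pipelines are proprietary and more elaborate. The “virtual lens” (50 mm at f/2, focused at 2 m) is hypothetical. A strand of hair on the subject that is mis-measured as 2.5 m away gets a visible blur disk instead of staying sharp.

```python
def blur_diameter_mm(focal_mm: float, f_number: float,
                     focus_dist_mm: float, subject_dist_mm: float) -> float:
    """Thin-lens circle-of-confusion diameter on the sensor (mm) for a point
    at subject_dist_mm when the lens is focused at focus_dist_mm."""
    if focus_dist_mm <= focal_mm:
        raise ValueError("focus distance must exceed focal length")
    aperture_mm = focal_mm / f_number                       # entrance pupil diameter
    magnification = focal_mm / (focus_dist_mm - focal_mm)   # at the focus plane
    return aperture_mm * magnification * abs(subject_dist_mm - focus_dist_mm) / subject_dist_mm


if __name__ == "__main__":
    # Hypothetical virtual lens: 50 mm at f/2, focused on a subject 2 m away.
    for label, depth_mm in [("subject", 2000), ("hair misread as 2.5 m", 2500),
                            ("background", 6000)]:
        print(f"{label}: {blur_diameter_mm(50, 2, 2000, depth_mm):.3f} mm blur")
```

The blur a pixel receives depends only on its measured depth, so any depth error translates directly into the wrong amount of blur.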

The Hidden Enemy: Crosstalk in Camera Sensors

Why Crosstalk Gets Worse in Depth Sensors

If you’ve ever noticed strange halos around subjects in portrait mode, or inaccuracies when your smartphone tries to separate foreground from background, you may have seen crosstalk at work, though it is rarely the only culprit. But why are 3D depth cameras particularly vulnerable to this issue?

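The answer lies in how these sensors work. Unlike traditional cameras that capture visible light, depth sensors rely on near-infrared light. They project IR light onto a scene and measure how long it takes to return, or they analyze how a structured light pattern lands on it. The challenge? Silicon absorbs near-infrared light far less readily than visible light. A green photon is typically absorbed within about a micrometer of the surface, while a near-infrared photon at the wavelengths depth sensors commonly use (around 850 or 940 nm) can travel tens of micrometers before it is absorbed. That gives light more room to stray sideways, and the electrons it frees are generated deep in the silicon, where they can diffuse into a neighboring pixel before being collected.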
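The Beer-Lambert law makes the visible-versus-infrared difference concrete. This sketch compares how much light a hypothetical 3 µm-thick silicon layer absorbs at 550 nm and at 940 nm. The absorption coefficients are approximate, order-of-magnitude values for crystalline silicon at room temperature, not data for any specific sensor.

```python
import math

# Approximate absorption coefficients of crystalline silicon, in 1/cm.
# Order-of-magnitude values, assumed here for illustration only.
ABSORPTION_PER_CM = {
    "green (550 nm)": 7.0e3,
    "near-infrared (940 nm)": 2.0e2,
}


def fraction_absorbed(alpha_per_cm: float, thickness_um: float) -> float:
    """Beer-Lambert law: share of photons absorbed within the given depth."""
    thickness_cm = thickness_um * 1e-4
    return 1.0 - math.exp(-alpha_per_cm * thickness_cm)


def absorption_length_um(alpha_per_cm: float) -> float:
    """Depth (micrometres) at which intensity has fallen to 1/e."""
    return 1e4 / alpha_per_cm


if __name__ == "__main__":
    for band, alpha in ABSORPTION_PER_CM.items():
        print(f"{band}: 1/e depth {absorption_length_um(alpha):.1f} um, "
              f"absorbed in 3 um: {fraction_absorbed(alpha, 3.0):.0%}")
```

With these assumed values, most of the green light is absorbed within the layer while only a small fraction of the 940 nm light is, which is why infrared-sensitive pixels tend to use thicker silicon and benefit from isolation that reaches deep into it.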

Here’s where things get tricky. Modern sensors pack hundreds of thousands to millions of tiny pixels into compact spaces, with pixel pitches measured in micrometers, to deliver the detail we expect. When light or charge meant for one pixel “leaks” into its neighbors, engineers call it crosstalk. Think of it like trying to have a private conversation in a crowded room where everyone’s standing shoulder to shoulder.
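A toy model helps show what leakage does to an edge. This Python sketch assumes a single row of pixels in which each pixel keeps a fixed share of its signal and passes the rest equally to its two neighbors. Real sensors leak in two dimensions and unevenly, so treat the numbers as illustrative.

```python
from typing import List


def apply_crosstalk(signal: List[float], leak_fraction: float) -> List[float]:
    """Each pixel keeps (1 - leak_fraction) of its signal and passes
    leak_fraction / 2 to each neighbour. Shares that would fall off the end
    of the row are simply lost."""
    n = len(signal)
    out = [0.0] * n
    for i, value in enumerate(signal):
        out[i] += value * (1.0 - leak_fraction)
        share = value * leak_fraction / 2.0
        if i > 0:
            out[i - 1] += share
        if i < n - 1:
            out[i + 1] += share
    return out


if __name__ == "__main__":
    edge = [0.0, 0.0, 0.0, 100.0, 100.0, 100.0]  # dark background, bright subject
    for leak in (0.15, 0.03):
        print(f"{leak:.0%}:", [round(v, 1) for v in apply_crosstalk(edge, leak)])
```

With 15% leakage, the dark pixel beside the edge picks up 7.5% of its bright neighbor’s signal; at 3% it picks up only 1.5%, so the edge stays much crisper.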

The precision required makes matters worse. Depth calculations depend on extremely accurate measurements. Even a small amount of stray light bleeding into the wrong pixel can throw off depth readings by centimeters or more. That’s the difference between a beautifully blurred background and an awkward cutout effect where your subject’s hair merges unnaturally with the scenery behind them.
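For an indirect time-of-flight sensor, the “centimeters or more” figure is easy to reproduce. Each return arrives as a phasor whose angle encodes distance, so when stray light from a far background lands in a pixel looking at a nearby subject, the pixel reports a blended distance somewhere in between. The sketch assumes a hypothetical 20 MHz modulation frequency, a subject at 1 m and a background at 3 m, and it ignores the fact that a farther, dimmer background would usually contribute less light.

```python
import cmath
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def itof_phasor(distance_m: float, amplitude: float, mod_freq_hz: float) -> complex:
    """Complex phasor an indirect-ToF pixel sees for a single return."""
    phase = 4.0 * math.pi * mod_freq_hz * distance_m / SPEED_OF_LIGHT
    return amplitude * cmath.exp(1j * phase)


def measured_distance(returns, mod_freq_hz: float) -> float:
    """Sum the phasors of all (distance_m, amplitude) returns reaching the
    pixel and convert the resulting phase back to a distance."""
    total = sum(itof_phasor(d, a, mod_freq_hz) for d, a in returns)
    phase = cmath.phase(total) % (2.0 * math.pi)
    return SPEED_OF_LIGHT * phase / (4.0 * math.pi * mod_freq_hz)


if __name__ == "__main__":
    f_mod = 20e6  # hypothetical 20 MHz modulation (about 7.5 m unambiguous range)
    for leak in (0.15, 0.05, 0.01):
        d = measured_distance([(1.0, 1.0 - leak), (3.0, leak)], f_mod)
        print(f"{leak:.0%} crosstalk: subject at 1.00 m reads as {d:.3f} m")
```

Under these assumptions, 5% crosstalk shifts the subject’s reading by roughly 6 cm, and 15% by about 20 cm.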

You might have noticed this when photographing subjects with fine details like hair, glasses, or fence lines. Those frustrating artifacts where the background blur seems to “eat into” your subject? Crosstalk can be part of the cause, because it muddies the sensor’s picture of where one surface ends and another begins, although segmentation software also plays a role.

Enter Deep Trench Isolation: The Pixel Bodyguard

How Deep Trench Isolation Actually Works

Think of your camera sensor as a bustling apartment building, where each pixel is a separate unit trying to collect light independently. The problem? Without proper walls between units, signals can bleed from one pixel into its neighbors—a phenomenon called crosstalk that muddles depth information and creates inaccurate readings.

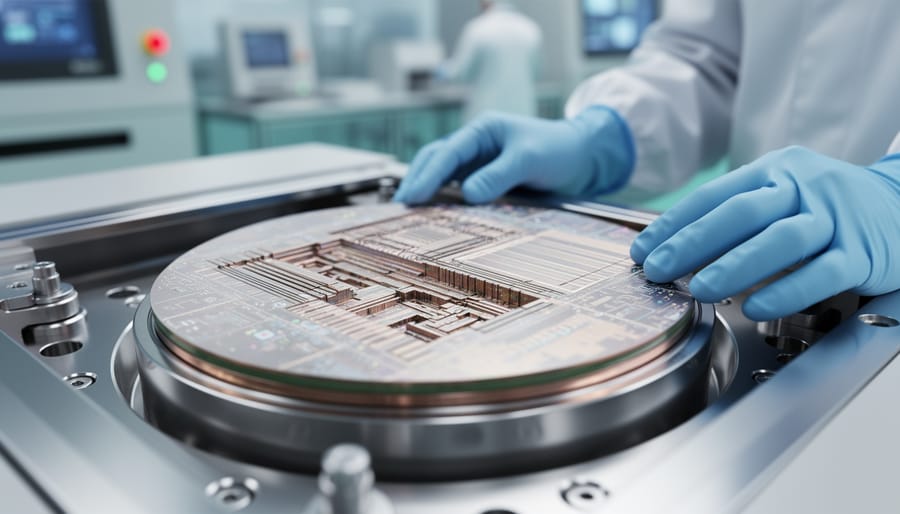

Deep Trench Isolation solves this by constructing precise barriers between pixels, and this is where the “deep” part becomes crucial. Imagine digging narrow trenches deep into your building’s foundation instead of just scratching its surface. These trenches are typically much narrower than a micrometer but several micrometers deep, reaching partly or fully through the layer of silicon in which the photodiodes sit. They are then filled with insulating material, usually silicon dioxide or a similar dielectric.

Why go so deep? Traditional isolation techniques only created shallow barriers, like putting up partial walls between apartments. Light and electrical charge could still find ways around these incomplete boundaries, especially when light arrives at an angle. Deeper trenches, in some designs extending through the full silicon layer, are more like solid walls from the basement to the roof: they don’t make the separation perfect, but they close off most of the paths around the barrier.
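Oxide-filled trenches also work optically. Silicon’s refractive index (roughly 3.6 in the near-infrared) is far higher than silicon dioxide’s (about 1.45), so light meeting a trench wall at a grazing angle is totally internally reflected back into its own pixel. This sketch applies Snell’s law under idealized assumptions: a flat sensor surface with no microlens, a trench thick enough that light cannot tunnel through it, and the index values just quoted.

```python
import math


def critical_angle_deg(n_inside: float, n_outside: float) -> float:
    """Snell's law: incidence angle (from the surface normal) above which
    light is totally internally reflected. Requires n_inside > n_outside."""
    return math.degrees(math.asin(n_outside / n_inside))


def angle_in_silicon_deg(angle_in_air_deg: float, n_si: float) -> float:
    """Refraction at the sensor surface: ray angle from vertical inside silicon."""
    return math.degrees(math.asin(math.sin(math.radians(angle_in_air_deg)) / n_si))


def reflected_at_trench(angle_in_air_deg: float, n_si: float = 3.6,
                        n_fill: float = 1.45) -> bool:
    """True if a ray entering at this angle would be totally internally
    reflected by a vertical, idealised oxide-filled trench wall."""
    ray_from_vertical = angle_in_silicon_deg(angle_in_air_deg, n_si)
    incidence_on_wall = 90.0 - ray_from_vertical  # the wall's normal is horizontal
    return incidence_on_wall > critical_angle_deg(n_si, n_fill)


if __name__ == "__main__":
    print(f"Si/SiO2 critical angle: {critical_angle_deg(3.6, 1.45):.1f} deg")
    for angle in (30, 60, 89):
        inside = angle_in_silicon_deg(angle, 3.6)
        print(f"{angle} deg in air -> {inside:.1f} deg in silicon, "
              f"reflected: {reflected_at_trench(angle)}")
```

Because refraction at the surface bends every entering ray to within about 16 degrees of vertical, rays that reach a vertical trench wall do so far beyond the roughly 24-degree critical angle. In practice, very thin trenches let some light tunnel through, and the trench’s electrical role, blocking charge that diffuses sideways, is also important.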

The manufacturing process is remarkably precise. Engineers cut these narrow, deep trenches into the silicon wafer with deep reactive-ion etching, then carefully fill them with insulating material. The result is a grid-like structure in which each pixel sits in its own chamber, surrounded on all sides by insulating walls.

For depth cameras specifically, this isolation is transformative. When your camera emits infrared light to calculate distance, each pixel needs to record the reflected light without contamination from its neighbors. DTI sharply reduces how much of a bright reflection hitting one pixel leaks into adjacent pixels, leakage that would otherwise throw off distance calculations and create the halos and inaccurate depth maps you might have noticed in older smartphone portrait modes.

The practical benefit? Cleaner depth separation, more accurate subject isolation, and better performance in challenging lighting conditions where precise pixel-level accuracy makes all the difference.

DTI vs. Traditional Pixel Separation Methods

Before Deep Trench Isolation came along, camera manufacturers relied on other methods to prevent pixel crosstalk, and the difference in performance is striking. Traditional designs used shallow trench isolation (STI), which separates pixels with grooves only a few hundred nanometers deep, filled with insulating material. Think of it like building short fences between neighbors’ yards: they provide some separation, but things can still spill over pretty easily.

The problem with STI becomes especially apparent in depth-sensing applications. When infrared light hits the sensor at an angle, which happens constantly in 3D depth cameras, photons and the charge they generate deep in the silicon frequently end up in adjacent pixels. This contamination could result in crosstalk levels of 10-15% or higher in traditional designs, meaning that up to 15% of the signal might end up in the wrong pixel. For depth cameras, this translates directly into inaccurate distance measurements and fuzzy edges in depth maps.

Deep Trench Isolation takes a fundamentally different approach by cutting much deeper barriers between pixels, sometimes extending through the entire silicon layer. Reported results put DTI crosstalk below 3%; measured against the 10-15% above, that is a relative reduction of roughly 70-80%. This isn’t just a theoretical win on paper; photographers using devices with DTI-equipped depth cameras notice sharper subject separation in portrait mode, more accurate background blur, and better performance when capturing depth information in challenging lighting conditions.
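The percentage arithmetic is worth spelling out, since “improvement” can be read several ways. This sketch computes the relative reduction implied by the 10-15% and below-3% crosstalk figures in this section, along with the share of signal that stays in the correct pixel. The function names are illustrative.

```python
def relative_reduction(before_pct: float, after_pct: float) -> float:
    """Fractional drop in crosstalk: (before - after) / before."""
    if before_pct <= 0:
        raise ValueError("baseline crosstalk must be positive")
    return (before_pct - after_pct) / before_pct


def signal_kept_pct(crosstalk_pct: float) -> float:
    """Share of a pixel's signal (in percent) that stays in that pixel."""
    return 100.0 - crosstalk_pct


if __name__ == "__main__":
    for before in (10.0, 15.0):
        print(f"{before:.0f}% -> 3%: {relative_reduction(before, 3.0):.0%} less crosstalk")
    print(f"signal kept: {signal_kept_pct(15.0):.0f}% vs {signal_kept_pct(3.0):.0f}%")
```

Going from 15% to 3% is an 80% relative reduction, while going from 10% to 3% is 70%; either way, the share of signal landing in the right pixel rises from the mid-80s to about 97%.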

The practical benefit becomes obvious when you’re shooting a portrait outdoors at golden hour. Those stray light rays hitting your sensor at extreme angles would have caused edge bleed in older cameras, making hair and fine details look artificially processed. DTI-based sensors handle these scenarios much more gracefully, contributing to the broader wave of sensor technology improvements we’ve seen in recent years.

What This Means for Your Photography

Devices Already Using Deep Trench Isolation

Deep Trench Isolation has steadily made its way from cutting-edge research labs into the cameras and smartphones you might already own. Apple’s iPhone lineup has been at the forefront of implementing DTI in its front-facing TrueDepth camera systems, starting with the iPhone X in 2017. These cameras power Face ID and enable Portrait mode selfies with impressively accurate edge detection, even separating individual strands of hair from the background, a feat in which low sensor crosstalk works hand in hand with Apple’s segmentation software.

On the Android side, several flagship devices from Samsung, Google, and Huawei have incorporated DTI-enabled depth sensors in their multi-camera arrays. Samsung’s Galaxy S20+ and S20 Ultra, for instance, included a time-of-flight sensor to improve depth mapping for both photography and augmented reality applications, although Samsung dropped the dedicated ToF sensor from the Galaxy S21 series onward.

For dedicated cameras, similar isolation techniques are showing up in newer mirrorless models with advanced autofocus systems. Canon’s Dual Pixel CMOS AF II, found in cameras like the EOS R5 and R6, splits each pixel into two photodiodes and benefits from isolation between them, supporting phase-detection autofocus across nearly the entire frame.

Even some action cameras and drone-mounted cameras now incorporate depth-sensing capabilities with DTI technology, enabling features like automatic subject tracking and obstacle avoidance. If you’ve purchased a premium smartphone or mirrorless camera in the past three years, there’s a good chance you’re already benefiting from Deep Trench Isolation, even if the marketing materials don’t explicitly mention it by name.

When DTI Actually Matters (And When It Doesn’t)

Here’s the reality: DTI technology makes a measurable difference in specific shooting scenarios, but it’s not a game-changer for everyone. Understanding when it matters will save you from overspending on features you might not actually use.

DTI shines brightest in challenging lighting conditions. If you frequently shoot high-contrast scenes—think backlit portraits, sunset silhouettes, or indoor photography with bright windows—the improved sensor performance becomes noticeable. The reduction in crosstalk means cleaner depth data when your camera is working overtime to process extreme brightness variations. Similarly, photographers capturing fast-moving subjects in mixed lighting, like wedding photojournalists or sports shooters, will appreciate the more accurate depth detection that helps with subject tracking and autofocus reliability.
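Why does contrast matter so much? In a simple one-neighbor model, the leaked light is a fixed share of the bright pixel’s signal, so the damage to a dark pixel grows with the brightness ratio between them. This sketch assumes hypothetical signal levels and that half of the quoted crosstalk (7.5% for a 15% design, 1.5% for a 3% design) lands in the dark pixel.

```python
def contaminated_reading(true_signal: float, neighbor_signal: float, leak: float) -> float:
    """Reading of a pixel that receives `leak` (a fraction) of a neighbour's signal."""
    return true_signal + leak * neighbor_signal


def error_ratio(true_signal: float, neighbor_signal: float, leak: float) -> float:
    """How many times larger the reading is than the true signal."""
    return contaminated_reading(true_signal, neighbor_signal, leak) / true_signal


if __name__ == "__main__":
    dark = 10.0  # hypothetical signal of a shadowed pixel
    for label, bright in (("low contrast (2:1)", 20.0), ("high contrast (100:1)", 1000.0)):
        for leak in (0.075, 0.015):
            print(f"{label}, {leak:.1%} leak: reads "
                  f"{error_ratio(dark, bright, leak):.2f}x the true value")
```

With these assumed numbers, the same leak that barely matters at 2:1 contrast inflates the dark pixel’s reading several times over at 100:1, which is why backlit portraits and bright windows are where low-crosstalk sensors pull ahead.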

Low-light photography is another area where DTI proves its worth. When you’re pushing your ISO settings and squeezing every photon from dim environments, reduced leakage of light and charge between pixels helps maintain better depth accuracy. This translates to more reliable autofocus performance and cleaner background separation in portrait mode.

However, if you primarily shoot in controlled studio environments with consistent lighting, or if you’re mainly capturing landscapes in good daylight, you probably won’t notice dramatic differences. The improvement exists, but it’s subtle enough that most photographers wouldn’t spot it in their final images.

The bottom line? DTI is genuinely beneficial for professionals and serious enthusiasts who regularly encounter difficult lighting situations. For casual photographers who shoot primarily in favorable conditions, it’s a nice-to-have rather than a must-have feature.

The Future of 3D Depth Sensing

The evolution of 3D depth sensing is accelerating, and photographers stand to benefit tremendously from what’s coming next. Sensor manufacturers are pushing boundaries with smaller pixels that maintain excellent infrared sensitivity, while advances in computational photography are creating hybrid systems that merge traditional imaging with sophisticated depth mapping.

We’re already seeing professional applications emerge that seemed like science fiction just a few years ago. Professional 3D scanning for product photography and preservation work is becoming accessible to smaller studios. Instead of expensive dedicated equipment, photographers can now capture detailed three-dimensional models using camera systems they already own. Museums are using this technology to create digital archives of artifacts, while commercial photographers are producing interactive product views that let customers examine items from every angle.
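A common building block of depth-based 3D scanning is turning each depth pixel into a 3D point through a pinhole camera model. This sketch assumes the camera’s intrinsics are known: focal lengths `fx`, `fy` and principal point `cx`, `cy`, all in pixels. The parameter values are hypothetical, and real scanning software also aligns and fuses many frames.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def depth_to_points(depth_m: List[List[float]], fx: float, fy: float,
                    cx: float, cy: float) -> List[Point]:
    """Back-project a depth map through a pinhole camera model.

    depth_m[v][u] is the depth along the optical axis (metres) at pixel
    column u, row v. Non-positive depths are treated as missing and skipped.
    """
    points: List[Point] = []
    for v, row in enumerate(depth_m):
        for u, z in enumerate(row):
            if z <= 0:
                continue
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


if __name__ == "__main__":
    # Hypothetical 2x2 depth map with one missing reading (0.0).
    cloud = depth_to_points([[1.0, 0.0], [2.0, 2.0]], 500.0, 500.0, 0.5, 0.5)
    for p in cloud:
        print(p)
```

Every error in the depth map becomes a misplaced point in the model, so cleaner depth data directly yields cleaner scans.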

Volumetric video represents another frontier. This technique captures subjects in full 3D, allowing viewers to move around the scene from different perspectives. While currently demanding specialized setups, the integration of 3D sensor technology into mainstream cameras suggests this could become widely available within a few years.

Even traditional photography workflows are evolving. Focus stacking can benefit from precise depth data, which lets software decide how to blend multiple exposures based on actual distance measurements instead of relying only on estimates of local sharpness. This means better macro photography, sharper landscape images, and more creative control over depth of field in post-processing.
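Here is one way depth data could guide a focus stack, sketched in Python under stated assumptions: every frame is already aligned, each frame’s focus distance is known, and the depth map matches the frames pixel for pixel. For each pixel it picks the frame focused closest to that pixel’s measured depth, comparing distances in dioptres (1/metres) because depth of field is roughly symmetric on that scale. Conventional stacking tools typically choose by local sharpness and blend smoothly rather than hard-switching, so treat this as an illustration rather than a recipe.

```python
from typing import List, Sequence


def nearest_index(values: Sequence[float], target: float) -> int:
    """Index of the value closest to target."""
    return min(range(len(values)), key=lambda i: abs(values[i] - target))


def pick_frames_by_depth(depth_m: List[List[float]],
                         focus_dists_m: Sequence[float]) -> List[List[int]]:
    """For each pixel, choose the frame whose focus distance best matches the
    pixel's measured depth, comparing in dioptres. Depths must be positive."""
    focus_dioptres = [1.0 / d for d in focus_dists_m]
    return [[nearest_index(focus_dioptres, 1.0 / depth) for depth in row]
            for row in depth_m]


def merge_stack(frames: List[List[List[float]]],
                choice: List[List[int]]) -> List[List[float]]:
    """Assemble the output by copying each pixel from its chosen frame."""
    return [[frames[choice[y][x]][y][x] for x in range(len(choice[y]))]
            for y in range(len(choice))]


if __name__ == "__main__":
    depth = [[0.5, 1.0], [2.0, 5.0]]  # hypothetical depth map, metres
    focus = [0.5, 1.0, 3.0]           # hypothetical focus distances, metres
    print(pick_frames_by_depth(depth, focus))
```

Comparing in dioptres sends the 2 m pixel to the frame focused at 3 m rather than 1 m, which matches how depth of field extends further behind the focus point than in front of it.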

The practical takeaway? Depth sensing isn’t just about autofocus anymore. It’s becoming a fundamental tool that expands what’s possible behind the lens, opening creative opportunities we’re only beginning to explore.

Deep Trench Isolation might sound like something only sensor engineers need to worry about, but this behind-the-scenes technology helps explain why today’s 3D depth cameras deliver such dramatically improved results compared to models from just a few years ago. By sharply reducing optical and electrical crosstalk between pixels, DTI has quietly transformed how accurately cameras can capture depth information, leading to cleaner background blur, more precise subject isolation, and better performance in challenging lighting conditions.

The good news? You don’t need to understand the engineering details to benefit from this advancement. When shopping for new gear with depth-sensing capabilities, look for cameras released in the past two to three years, as many manufacturers have adopted DTI or similar pixel isolation in their latest sensors. Pay attention to real-world depth map quality in reviews, and test portrait mode performance in various lighting conditions if possible.

What’s exciting is how sensor innovations like DTI expand creative possibilities. Better depth data means more reliable computational photography features, opening doors for techniques that were previously inconsistent or unavailable. As sensor technology continues advancing, the gap between what we envision and what our cameras can capture keeps narrowing, putting more creative control directly in your hands.